
by John Pavlus

a future full of humanoid robots.

Vast improvements in humanoid robots, but graduating to widespread use might require going back to the fundamentals...

The last time I covered the science of humanoid robots, the state of the art looked downright Orwellian - by which I mean, "four legs good, two legs bad."

It was 2015... Boston Dynamics' first "Spot" quadruped had taken YouTube by storm, confidently trotting up stairs and recovering from vicious kicks.

Also popular at the time:

I felt sorrier for those tottering metal lobsters than I ever did for Spot. Bipedal locomotion is hard...

Cut to now. Humanoids have apparently become so advanced that Tesla is mothballing some electric car models to make way for its Optimus humanoid robot, and start-ups are preselling android butlers with a straight face.

Hype aside, I was genuinely curious:

Sure, "AI" happened (that is, in the post-ChatGPT sense). I certainly hadn't overlooked that.

But I had no idea what it possibly had to do with robots not falling down anymore.

In philosophy, "qualia" refers to the subjective qualities of our experience: what it's like for Alice to see blue or for Bob to feel delighted. Qualia are "the ways things seem to us," as the late philosopher Daniel Dennett put it. In these essays, our columnists follow their curiosity, and explore important but not necessarily answerable scientific questions.

For a reality check, I called Scott Kuindersma, who recently left Boston Dynamics after many years there, and Jonathan Hurst of Agility Robotics.

Surely today's robotic bipedal marvels can ascend a few stairs and open a door without breaking a nonexistent sweat, something they famously struggled with a decade ago.

Don't get me wrong: I don't believe that some sock-faced robot zombie is close to taking over my household chores.

But stairs and doors? It's 2026.

Why are humanoids still this... hard?

Fast, Cheap, and Mostly Under Control

To be fair, a paradigm shift did happen. Three, actually...

First, deep learning - neural networks running on fast GPU chips - turbocharged computer vision and reinforcement learning, which radically improved the speed and sophistication with which robots could perceive and interact with their environments.

Then in 2016, a revolution in actuation (roboticist-speak for "making parts move") began:

Most recently came the large language models.

Adapting chatbot technology for robots, it turns out, lets them autonomously plan and perform multistep tasks, such as coring an apple or emptying a dishwasher (in demos, at least).

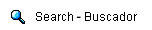

These advances created the night-and-day difference between "Running Man," the hulking, halting version of Atlas that won second place in 2015's DARPA Robotics Challenge, and the svelte, smooth Atlas recently shown breakdancing and autonomously moving irregular items from one bin to another (while dealing with interference from a hockey stick–wielding human).

The Atlas robot from Boston Dynamics shows off in a video from early 2025.

That fluid gait, for example, comes from deep reinforcement learning.

Roboticists once coordinated each movement with various hand-engineered algorithms, using equations to model the (simplified) physics of the robot.

This process teaches the network a "policy" for how to translate feedback from its environment into actions.

There's no longer any need to model a robot's leg as a linear inverted pendulum, for example.
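For readers curious what that older style of modeling looks like, here is a minimal sketch of the textbook linear inverted pendulum: the robot's mass is treated as a point held at constant height above a single foot contact, so its horizontal motion obeys x'' = (g / height) * (x - foot). The function name, parameters, and numbers are illustrative assumptions, not anything taken from a particular robot's controller.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def lip_com_position(t, x0, v0, foot, height):
    """Horizontal centre-of-mass position of a linear inverted pendulum at time t.

    Closed-form solution of x'' = (G / height) * (x - foot), where `foot` is the
    contact point and `height` is the (assumed constant) centre-of-mass height.
    """
    omega = math.sqrt(G / height)  # natural frequency of the pendulum, 1/s
    offset = x0 - foot             # how far the mass starts from the foot, m
    return foot + offset * math.cosh(omega * t) + (v0 / omega) * math.sinh(omega * t)


if __name__ == "__main__":
    # A mass starting 5 cm ahead of the foot drifts further away over time.
    for t in (0.0, 0.2, 0.4):
        print(t, round(lip_com_position(t, x0=0.05, v0=0.0, foot=0.0, height=0.9), 4))
```

The point of the sketch is how much it leaves out: no knees, no arms, no slipping feet. A hand-built controller has to plan footsteps around a simplification like this one, whereas a learned policy does not need the equation at all.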

This strategy was aided by the proprioceptive actuators pioneered by Sangbae Kim of the Massachusetts Institute of Technology in his Cheetah series of robots.

...every time it fails to perfectly execute a policy in the real world - or encounters an obstacle or disturbance. Kim's actuators got around the problem with controllable "compliance," or flexible springiness.

Over the past decade, they've gotten cheaper and more widely accessible.

If reinforcement learning and compliant actuation were gifts to humanoid robotics, multimodal AI put a bow on them.

In 2023, Google DeepMind introduced "vision-language-action" (VLA) models, which can take in video and natural language and produce movement commands as outputs.

In a stroke, VLAs united previously disparate approaches to robotic perception, planning, and control into one general-purpose pipeline.

So why don't they add up to humanoids being scientifically "solved" - at least in principle?

May the Force Be With You

Pulkit Agrawal, who studies robot learning at the appropriately named Improbable AI Lab at MIT, had an answer when I reached him there last month.

He wasn't referring to cosmic matters like general relativity or quantum gravity, nor to the virtual "world models" that currently excite leading AI researchers such as Yann LeCun.

Instead, Agrawal was talking about mastering something a high school science student ought to be familiar with:

Press images of the Neo from 1X (above) and Tesla's Optimus (below) imagine a future of humanoid helpers.

The whole point of the humanoid form factor, after all, is to deliver what Kim calls "multipurpose mobile manipulation," or the ability to move almost anywhere (including on stairs and through doors) and handle almost anything (from unloading pallets to screwing in light bulbs), without hurting anyone in the process.

In short, what we do every day.

Force control is simple in principle.

Roboticists have known how to make this happen for more than 40 years:

This feedback can be driven by force sensors built into the robot's joints, but the catch is that the classical approaches require a lot of knowledge about the robot, environment, and task in order to work, he further explained.

That approach to controlling force works great for industrial robots with specific tasks to perform, and it even helped with humanoid locomotion.

But it was impossible to generalize.
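To make the idea of force feedback concrete, here is a minimal one-dimensional sketch: a fingertip presses on a surface, measures the contact force, and nudges its position until the force matches a target. Everything in it is an assumption for illustration, including the spring-like surface, the stiffness value, the gain, and the function names. It is not a reconstruction of any specific classical controller.

```python
def contact_force(position, surface=0.0, stiffness=1000.0):
    """Force (N) from an idealised spring-like surface; zero when not touching."""
    return stiffness * max(0.0, position - surface)


def regulate_force(target, position=-0.01, gain=0.01, dt=0.001, steps=2000):
    """Nudge the fingertip until the measured contact force matches the target."""
    force = contact_force(position)
    for _ in range(steps):
        error = target - force         # force error, N
        position += gain * error * dt  # push further if too light, back off if too hard
        force = contact_force(position)
    return position, force


if __name__ == "__main__":
    position, force = regulate_force(target=5.0)
    print(f"position = {position:.4f} m, force = {force:.3f} N")
```

Note that the gain here was hand-picked for the assumed surface stiffness. Against a much stiffer surface, the same gain would overshoot and could oscillate or diverge, which is one small illustration of why this kind of controller needs to know so much about its environment in advance.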

Not only were they designed to absorb unexpected impacts without damage, they were also very "transparent," which meant that the motor converted electrical current into a proportional amount of force (and vice versa) with relatively little error.
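As a rough sketch of what "transparent" means in practice, the snippet below treats the relationship between motor current and limb force as a simple proportional mapping that can be read in either direction. The torque constant, gear ratio, and lever arm are made-up illustrative values, the function names are hypothetical, and the model ignores friction, gear losses, and inertia, which are exactly the sources of error a real actuator has to keep small.

```python
def estimate_force(current_a, torque_constant=0.1, gear_ratio=6.0, lever_arm_m=0.3):
    """Estimate the force (N) at the end of a limb from motor current (A)."""
    joint_torque = torque_constant * current_a * gear_ratio  # N*m at the joint
    return joint_torque / lever_arm_m


def current_for_force(force_n, torque_constant=0.1, gear_ratio=6.0, lever_arm_m=0.3):
    """Inverse mapping: the current (A) expected to produce a desired force (N)."""
    return force_n * lever_arm_m / (torque_constant * gear_ratio)


if __name__ == "__main__":
    measured_current = 2.0  # amps, as read from the motor driver
    print(f"estimated force: {estimate_force(measured_current):.2f} N")
    print(f"current for 4 N: {current_for_force(4.0):.2f} A")
```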

In essence, the motor itself became a force sensor, which meant,

As reinforcement learning eclipsed manual programming as a way of controlling humanoid movement, "classic" force control was not forgotten.

It just got abstracted and delegated, in a way, to both hardware and AI.

Those neural networks are learning generalized policies that control the positions of a robot's body parts.

Force regulation often happens only indirectly in simulation training, or sometimes as a side effect when learned from video or human input.

But those methods don't explicitly teach the physics of force - at least, not yet.

DeepMind's Carolina Parada acknowledged that the VLA models basically just learn to move between specifically defined poses - and this approach goes a long way.

In 2015, the most advanced humanoid robots in the world competed at the DARPA Robotics Challenge Finals. The tech has since improved. (Credit: DARPA)

But only so far.

As long as robot bodies remain relatively stiff and heavy compared to ours,

Picture a regular egg and another made of solid steel:

Imagine trying to move a chair with your car, Agrawal said:

That's part of why Atlas moves like molasses while grasping auto parts but glides like a gymnast when it's not touching anything except the floor.

But he, Hurst, and Parada all readily grant that clever force workarounds won't deliver the all-purpose mobile dexterity our robot butlers need.

Even if today's VLA-brained bots, refined by reinforcement learning, had "an internet-sized" amount of positional data to train on,

Humanoids, for the most part, still don't, which means they have not mastered physics - at least not in the way we have, from a lifetime of interacting with our environments through the extraordinarily complex musculoskeletal and nervous systems gifted to us by evolution.

That's a big reason why even doors and stairs aren't fully "solved" for present-day humanoids.

Get Smart (or Start Over)?

So how do we get over the wall, scientifically speaking?

Most of the experts I asked suspect that it will take a new blend of hardware and software advances.

Tactile sensors for better data collection and robot hands that combine high power, compliance, and transparency with low inertia would accomplish a lot, and nobody believes that true material breakthroughs (like replacing motors with artificial muscles) will be necessary.

Finding more intelligent ways to control that hardware is key.

The Digit robot from Agility Robotics demonstrates fine motor control in an unstructured environment.

When asked how to achieve that, everyone had a different answer.

Agrawal is studying how to combine force control with reinforcement learning by having humanoids learn compliant behaviors in simulation, instead of moving between rigidly defined positions.

Russ Tedrake, whose work on "large behavior models" (a cousin of VLAs) produced the apple-coring robot demo, recently argued in Science Robotics for a ChatGPT-style regime of,

Frank Park, who wrote the book on modern robotics - literally, the textbook titled Modern Robotics - believes that current AI approaches should be torn down to the studs and replaced with ones that make physics fundamentals (such as force and acceleration) learnable at a foundational level.

In all these conversations, what struck me most wasn't the debates about which kinds of sensors, data, or AI architecture could "solve" humanoid robotics.

Rather, it was the sense that the scientific ethos of the field had changed.

Hurst, who had just spun Agility Robotics out of his Oregon State University lab when we first spoke, put a fine point on it.

(Editor's note: Gill Pratt recalled this conversation differently. He acknowledged that machine learning could allow performance beyond our formal understanding, but not that this was a cause for worry.)

Tedrake agreed but said that it's hardly the first time we've taken scientific and engineering leaps without a firm grip on the fundamentals.

So when will humanoids be solved?
