
by Eric Pfeiffer

from

Yahoo! News website

The crazy man walking down the city street holding a sign that reads “The end is near” might just have a point.

A team of mathematicians, philosophers and scientists at Oxford University’s Future of Humanity Institute say there is ever-increasing evidence that the human race’s reliance on technology could, in fact, lead to its demise.

The group has a forthcoming paper entitled “Existential Risk Prevention as the Most Important Task for Humanity,” arguing that we face a real risk to our own existence. And not a slow demise in some distant, theoretical future.

The end could come as soon as the next century.

There’s something about the end of the world that we just can’t shake.

Even with the paranoia surrounding the 2012 Mayan prophecies behind us, people remain fascinated by the potential for an extinction-level event. And popular culture is happy to indulge our anxiety.

This year alone, two major comedy films are set to debut, “The World’s End” and “This Is the End,” both of which take a humorous look at end-of-the-world scenarios.

For its part, NASA released a series of answers in 2012 to frequently asked questions about the 'end of the world.'

Interestingly, Nick Bostrom, the institute’s director, writes that well-known threats, such as asteroids, supervolcanic eruptions and earthquakes, are not likely to threaten humanity in the near future.

Even a nuclear explosion isn’t likely to wipe out the entire population; enough people could survive to rebuild society.

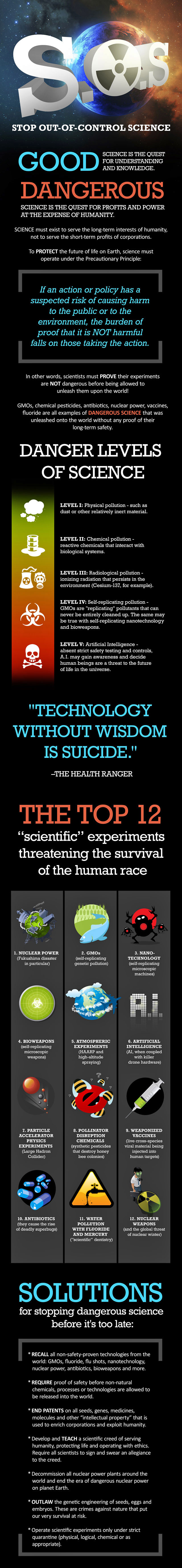

Instead, it’s the unknown factors behind innovative technologies that Bostrom says pose the greatest risk going forward.

In other words, ...could become our own worst enemy, if they aren’t already, with Bostrom calling them,

However, it’s not all bad news.

Bostrom notes that while a lack of understanding surrounding new technology poses huge risks, it does not necessarily mean our downfall.

-

This Time for Real

from

MSN News website

The end of humanity?

A skeleton mask at a 'Day of the Dead' event in Mexico City.

As one renowned physicist urges us all to go into space to save ourselves, others are actually agreeing that humans may become extinct.

This isn’t media hype, says a team of deep-reaching philosophers (naturally), scientists and mathematicians at Oxford University’s Future of Humanity Institute, who are finding more and more evidence for this conclusion.

In a forthcoming paper, "Existential Risk Prevention as the Most Important Task for Humanity," the institute’s director, Nick Bostrom, and his mission crew explore today’s risk of premature human extinction.

This could be the final century for humanity, Bostrom told the BBC, because our technological advancement has brought us to a point at which technology is far more sophisticated than we are.

This is not news to science fiction authors and Hollywood directors, who tend to imagine, in extreme yet prescient terms, a world in which our meddling with technology could send us into extinction. Isaac Asimov’s 1950 short-story collection "I, Robot" and Danny Boyle’s 2002 box-office hit "28 Days Later" are just two examples.

Now Bostrom and his contemporaries are taking the fiction out of the predictions and warning that we might be almost there.

Legendary physicist and cosmologist Stephen Hawking, who has been talking about our vulnerability for years, is still on a mission to wake us up.

Hawking, who is on the board of the new Center for the Study of Existential Risk at Cambridge University, has always said that it’s our curiosity that might save us.

Synthetic biology, nanotechnology and machine intelligence have contributed greatly to our quality of life, but they also pose serious threats to our future.

We face nuclear destruction and, as we’ve been hearing for years, environmental Armageddon.

O'Heigeartaigh points out a conundrum in the Hawking/Carl Sagan theory that knowledge is power.

The more we investigate and experiment with technology, the less control we seem to have.

Can knowledge save us? Maybe. Maybe not.

What does this mean?

It means we might not even know how high the risk of premature human extinction is.

This uncertainty is precisely the motivation behind such research: the study of events that could determine whether or not we’ll be around in the next century.
