
by Geoffrey Ingersoll

from

BusinessInsider Website

Not just in terms of structure, but more importantly in terms of brains. Bees are the next-gen weapons for delivering military payloads and for reconnaissance and surveillance.

Jesse Emspak, an analyst for Discovery, reports that researchers in the U.K. are attempting to build a "bee brain" to meet the requirements of what would be a new age of drone warfare.

The important distinction is that these new drones are not autonomous in the way we traditionally think of it: preprogrammed routes, with missions executed or aborted based on inputs and stipulations coded by the drone operators.

No, these drones actually "think."

From Emspak:

Called the "Green Brain," the software model will focus on how a bee sees and smells. With that, a robotic bee could be built that actually behaves like a real bee, rather than just flying on a pre-programmed path and carrying out instructions.

James Marshall, a computer scientist at the University of Sheffield who is leading the three-year project, told Emspak,

Emspak said that NVIDIA, a graphics card company most famous for video games, will provide the computing power necessary to run "simulations" which try to mimic a bee's brain.

Once they've worked out all the kinks, the NVIDIA cards can "transmit" the bee-brain program simulation out to the pieces of hardware in the field (since "it isn't yet possible to cram that much computing power into a small space").

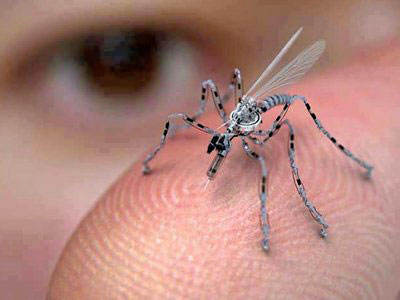

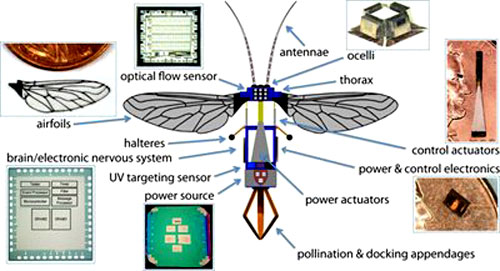

The hardware side of things is where Harvard University researchers come into play. Their RoboBees program is dead set on enhancing the presence of biological bees in crop pollination, or so they hope.

The aims of the program, from their site:

It's funny to note that their mission goals list military uses fourth.

Now, Harvard only gets about one percent of the Pentagon funding that MIT gets, but it doesn't take a genius to figure out that farmers aren't going to be the first in line for orders of this RoboBee hardware.

And it doesn't take removal of a tinfoil hat to figure out that "autonomously pollinating a field of crops" means the RoboBees are designed with carrying payloads in mind.

Here's a pretty cool tidbit from the site:

[A mission goal is to] foster novel methods for designing and building an electronic surrogate nervous system able to deftly sense and adapt to changing environments; and advance work on the construction of small-scale flying mechanical devices.

The electronic surrogate nervous system means that the RoboBee will have, get this, senses.

That's one of the main goals of both programs. The U.K. researchers want to build a brain that can process sensory information and make decisions based on that information, and the fellas in the U.S. are building the sense hardware that would transmit that information back to the NVIDIA brain.

So the RoboBees can see, smell, and feel their environment.
