|

from ExtremeTech Website

There's been a lot of talk about Moore's Law this month, now that it's 50 years old, and about whether it can continue in its current form or needs further adjustment.

Either way, at some point, the law is going to break down - probably within the next 10 years - as there's only so far we can shrink the transistors on a chip. Quantum computing is often considered one of the most logical successors to traditional computing.

If pulled off, it could spur innovation across many fields, from sorting through tremendous Big Data stores of unstructured information - which will be key in making discoveries - to designing super materials, new encryption methods, and drug compounds without trial-and-error lab testing.

For all of this to happen, though, someone has to build a working quantum computer. And that hasn't happened yet, arguably aside from that giant (and controversial) D-Wave machine. We're a big step closer now, though.

IBM researchers, for the first time, have figured out how to detect and measure both bit-flip and phase-flip quantum errors simultaneously.

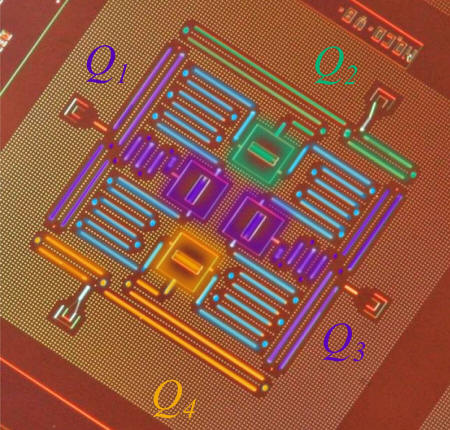

They also outlined a new, square quantum bit circuit design that could scale to much larger dimensions.

So what exactly is happening here?

Traditional computers normally understand what we refer to as bits, which can have just two values: 1 or 0. The bit is a symbol for two states that could be high or low voltage, or something like an on or off switch.

A qubit, or quantum bit, can also hold both values at the same time, which is called a superposition - 0+1 - with the two states in a phase relationship with each other.

This ability makes a quantum computer, at least in theory, much, much faster than a regular computer.
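To make the "0+1" idea a little more concrete, here is a minimal sketch in plain Python. It assumes the usual textbook picture of a qubit as a pair of amplitudes, one for 0 and one for 1; the names `ZERO`, `PLUS`, `MINUS`, and `probabilities` are made up for illustration and don't come from IBM's work.

```python
import math

# A qubit state as a pair of amplitudes (a, b) for the values 0 and 1.
# All names here are illustrative assumptions.
ZERO = (1.0, 0.0)            # definitely 0
ONE = (0.0, 1.0)             # definitely 1
S = 1 / math.sqrt(2)
PLUS = (S, S)                # equal superposition, "0+1"
MINUS = (S, -S)              # same weights, opposite sign: "0-1"

def probabilities(state):
    """Chance of reading 0 or 1 when the qubit is measured."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)

print([round(p, 3) for p in probabilities(ZERO)])   # [1.0, 0.0]
print([round(p, 3) for p in probabilities(PLUS)])   # [0.5, 0.5]
print([round(p, 3) for p in probabilities(MINUS)])  # [0.5, 0.5]
```

Note that `PLUS` and `MINUS` give the same measurement odds. They differ only in the sign between the two parts, and that sign is the "phase relationship" mentioned above.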

The problem is, a quantum computer won't work reliably until you deal with what's called quantum decoherence, which causes errors in calculations due to heat, defects, or electromagnetic radiation. Qubits are extremely delicate; simply measuring one could change its state. You could have a bit-flip error, which simply flips the qubit to the opposite state (1 instead of 0, for example).

And you could have a phase-flip error, which is an error in the sign of the superposition state.
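The two error types can be sketched in a few lines of Python, again treating a qubit as a pair of amplitudes for 0 and 1. This is a toy model under that assumption, and the function names `bit_flip` and `phase_flip` are hypothetical.

```python
import math

S = 1 / math.sqrt(2)
ZERO = (1.0, 0.0)   # definitely 0
ONE = (0.0, 1.0)    # definitely 1
PLUS = (S, S)       # superposition "0+1"
MINUS = (S, -S)     # superposition "0-1"

def bit_flip(state):
    """Swap the amplitudes of 0 and 1."""
    a, b = state
    return (b, a)

def phase_flip(state):
    """Reverse the sign of the amplitude of 1."""
    a, b = state
    return (a, -b)

print(bit_flip(ZERO) == ONE)      # True: 0 has become 1
print(phase_flip(ZERO) == ZERO)   # True: a phase flip leaves 0 unchanged
print(bit_flip(PLUS) == PLUS)     # True: a bit flip leaves "0+1" unchanged
print(phase_flip(PLUS) == MINUS)  # True: the sign of the superposition flipped
```

Each error is invisible on some states and obvious on others, which is one way to see why a check for bit flips alone, or for phase flips alone, isn't enough.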

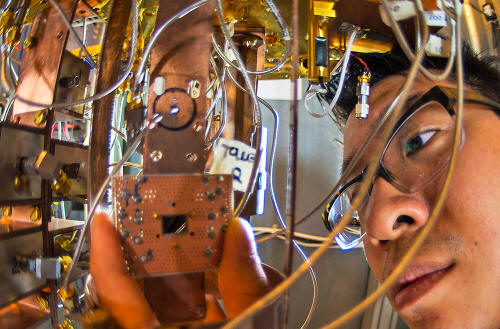

So quantum error correction is a necessary part of any large-scale, reliable quantum computer design. But with previous concepts, you could detect only one type of error or the other, not both at the same time. IBM's solution is a quantum bit circuit that's based on a square lattice of four supercooled, superconducting qubits, placed on a chip that's roughly a quarter inch square in size.

The square shape is key to working quantum error correction, as earlier linear designs didn't allow for it. And the shape also lets you scale it by adding more qubits.
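The idea of catching both error types at once can be illustrated with a toy simulation in Python. This sketch is not IBM's circuit: it assumes a textbook-style setup in which two data qubits share an entangled state and two parity checks (called `zz_parity` and `xx_parity` here, both hypothetical names) are read out. It computes the check values directly from the state rather than modeling the extra measurement qubits or any hardware noise.

```python
import math

S = 1 / math.sqrt(2)
# Two-qubit state as four real amplitudes for 00, 01, 10, 11
# (index = 2 * qubit1 + qubit0). This is the entangled state "00+11".
BELL = [S, 0.0, 0.0, S]

def apply_x(state, qubit):
    """Bit flip on one qubit: swap amplitudes that differ in that bit."""
    return [state[i ^ (1 << qubit)] for i in range(len(state))]

def apply_z(state, qubit):
    """Phase flip on one qubit: negate amplitudes where that bit is 1."""
    return [-amp if (i >> qubit) & 1 else amp for i, amp in enumerate(state)]

def overlap(a, b):
    """Inner product of two real-valued states."""
    return sum(x * y for x, y in zip(a, b))

def zz_parity(state):
    """+1 if no bit flip is detected, -1 if one is."""
    return round(overlap(state, apply_z(apply_z(state, 0), 1)))

def xx_parity(state):
    """+1 if no phase flip is detected, -1 if one is."""
    return round(overlap(state, apply_x(apply_x(state, 0), 1)))

def syndrome(state):
    return (zz_parity(state), xx_parity(state))

print(syndrome(BELL))                          # (1, 1): no error
print(syndrome(apply_x(BELL, 0)))              # (-1, 1): bit flip
print(syndrome(apply_z(BELL, 0)))              # (1, -1): phase flip
print(syndrome(apply_z(apply_x(BELL, 0), 0)))  # (-1, -1): both
```

The pair of check values gives a different result for each of the four cases, so a single readout tells you whether a bit flip, a phase flip, both, or neither has occurred.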

The two IBM breakthroughs are detailed in the April 29 issue of the journal Nature Communications.

The next step is to design and manufacture "a handful of superconducting qubits" reliably and repeatedly, with low error rates. Once that's done, we could very well be on the way to a full-blown quantum computer.

And if one could be built with just 50 quantum bits (qubits), instead of four, according to IBM,

|